A 15-Year-Old’s Grounded Take on Technology

Huiling is a 15-year-old Secondary 3 student, preparing to sit her O Levels next year. She lives with her parents and an older brother who is completing his A Levels. One of her parents is an engineer; her mother holds a polytechnic qualification. Notably, Huiling does not currently use any AI or robot technologies in her daily life, and this shapes her perspective. She doesn’t follow AI news, has no media references to draw on, and relies on her direct, practical instincts when assessing what robots should and shouldn’t do.

AI? Not much. But robots — all I can think of is I just hope they can help in the future with daily chores and the more labour-intensive work at home.

A Practical Vision of the Future

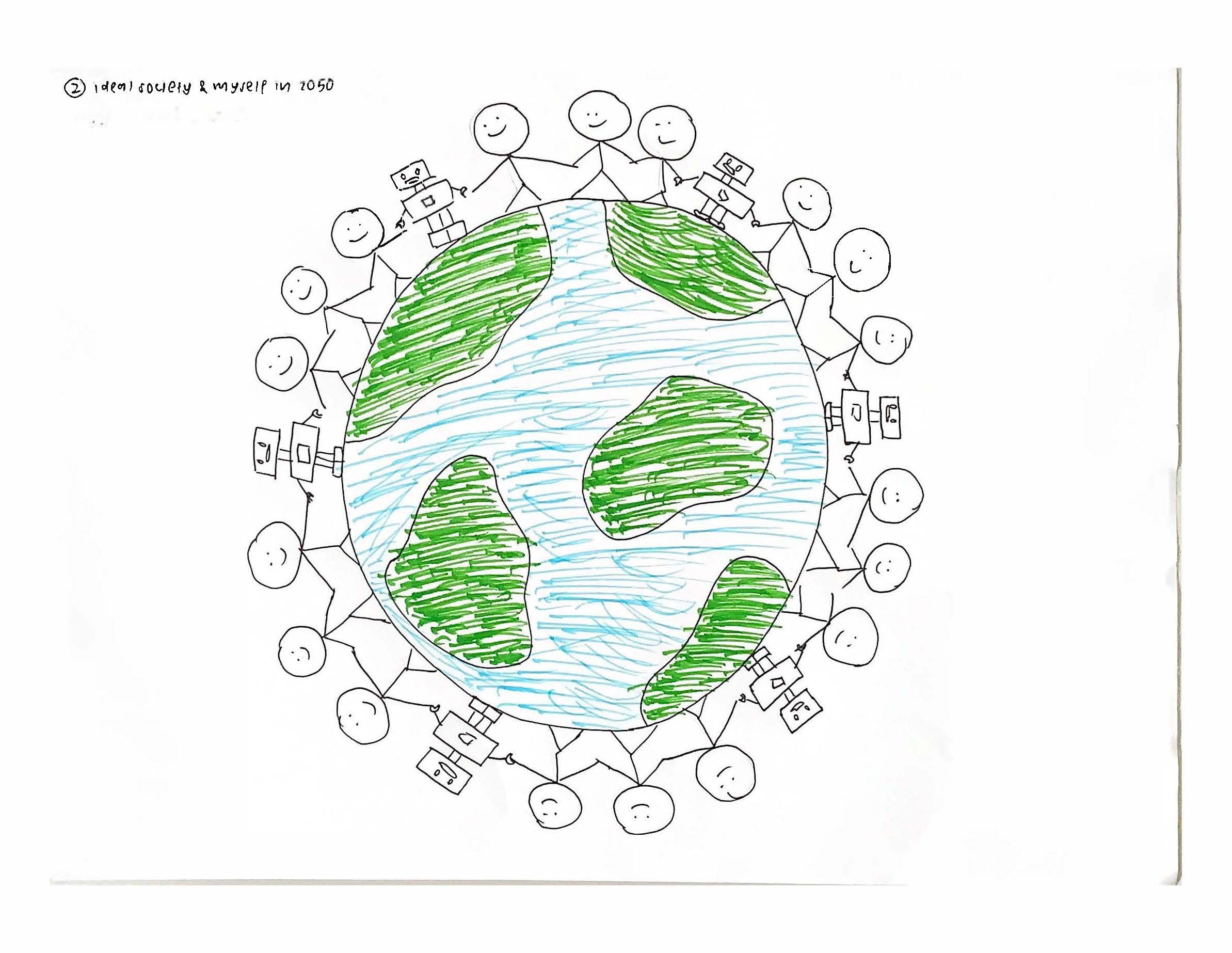

By 2050, Huiling’s personal goals are clear: a good job, financial security, and a comfortable life. She is drawn to high-earning fields such as investing, business, or accounting. She expects life to be more technologically advanced, but she is ambivalent about robots becoming more commonplace, particularly in public spaces and daily interactions.

I want it to be like it is now, where robots aren’t really involved in anything. But I think robots will be weird — they’re kind of scary.

Her reservation is rooted in authenticity: robots lack real feelings, and even if emotion could somehow be generated artificially, it would still feel fake. This wariness runs through much of her thinking about AI and social roles.

What Robots Can Do — With Limits

Despite her unease, Huiling identifies clear areas where robots would be welcome. At home, she wants them for all manner of housekeeping: cleaning, bed-making, dishwashing, mopping and sweeping. In restaurants, she is in favour of robot waiters — she observes that existing robots at a local club restaurant serve food from trays effectively, with fewer mistakes than human staff. She is also comfortable with automated cars (if affordable), drone delivery (with precise ETAs), and biometric identification systems at borders and in public spaces.

In hospitals, she limits robot involvement to medicine preparation and basic measurements — tasks already largely handled by machines. She does not want robots performing consultations or operations, citing the risk of malfunction.

What if the robot malfunctions during an operation? That is very dangerous.

People Over Algorithms

On questions of judgment, care, and human connection, Huiling is consistently sceptical of AI. She does not want AI teachers (too inflexible for the range of student questions), robot childcare (children need to be raised with human feeling), or AI management of workers (unnecessary, since performance is visible in the work itself). She also rejects AI judges and the use of AI in political decision-making and international judicial processes.

For elderly care, she is cautious: while robots might tidy a room or assist with basic tasks, she doubts whether older generations would be comfortable with robot caregivers. Similarly, she does not want AI caring for pets beyond occasional walks or clean-up duties — the whole point of having a pet, she argues, is to care for it yourself.

On assessment and qualifications, she is more open: AI grading of multiple-choice tests, entrance interview processes free from human bias, and passport scanning at borders all seem reasonable to her.

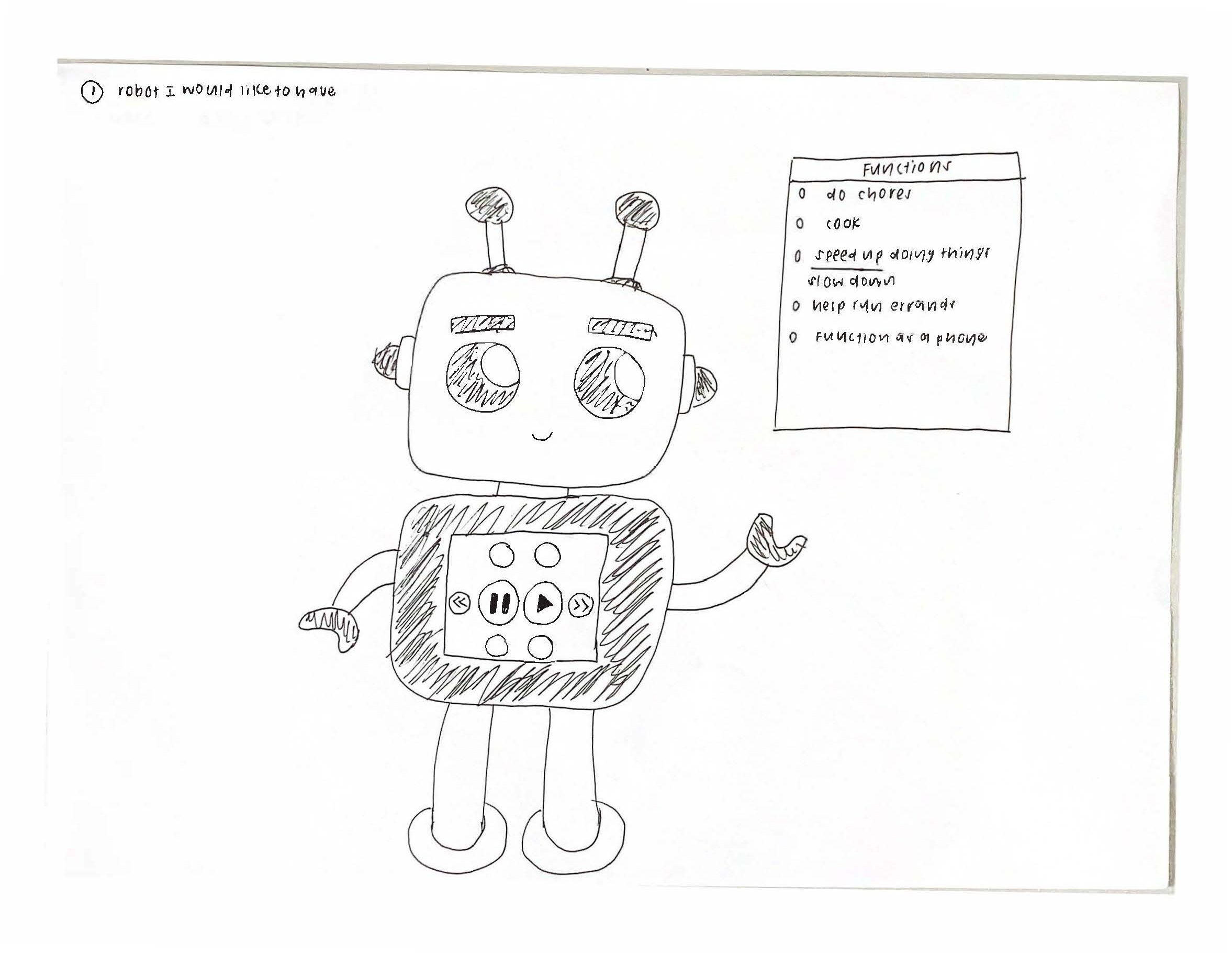

What She Wants in a Robot

Asked to describe her ideal robot, Huiling is specific: she wants it to look like a cartoon character — something like Pikachu or Doraemon — boxy and non-threatening. A humanoid robot would be “creepy.” Above all, she wants accuracy and comfort. She is not interested in AI-generated art, has no desire for intelligence augmentation, and trusts current AI systems very little, largely because she has never needed to use them.

They’re just not able to understand humans. They’re not able to connect with humans enough.