Meiling is a 21-year-old senior majoring in sociology at a university in China. She lives at home with her parents and grandparents, and her academic training clearly shapes how she thinks about AI: analytically, with a sociologist’s eye for structure, class, and power. Her relationship with smart technology is already cautious. She stopped using the voice assistant on her phone because she worried it might be eavesdropping on her, and the only AI device she uses regularly at home is a robot vacuum cleaner.

Growing Up With Data — and Doubts

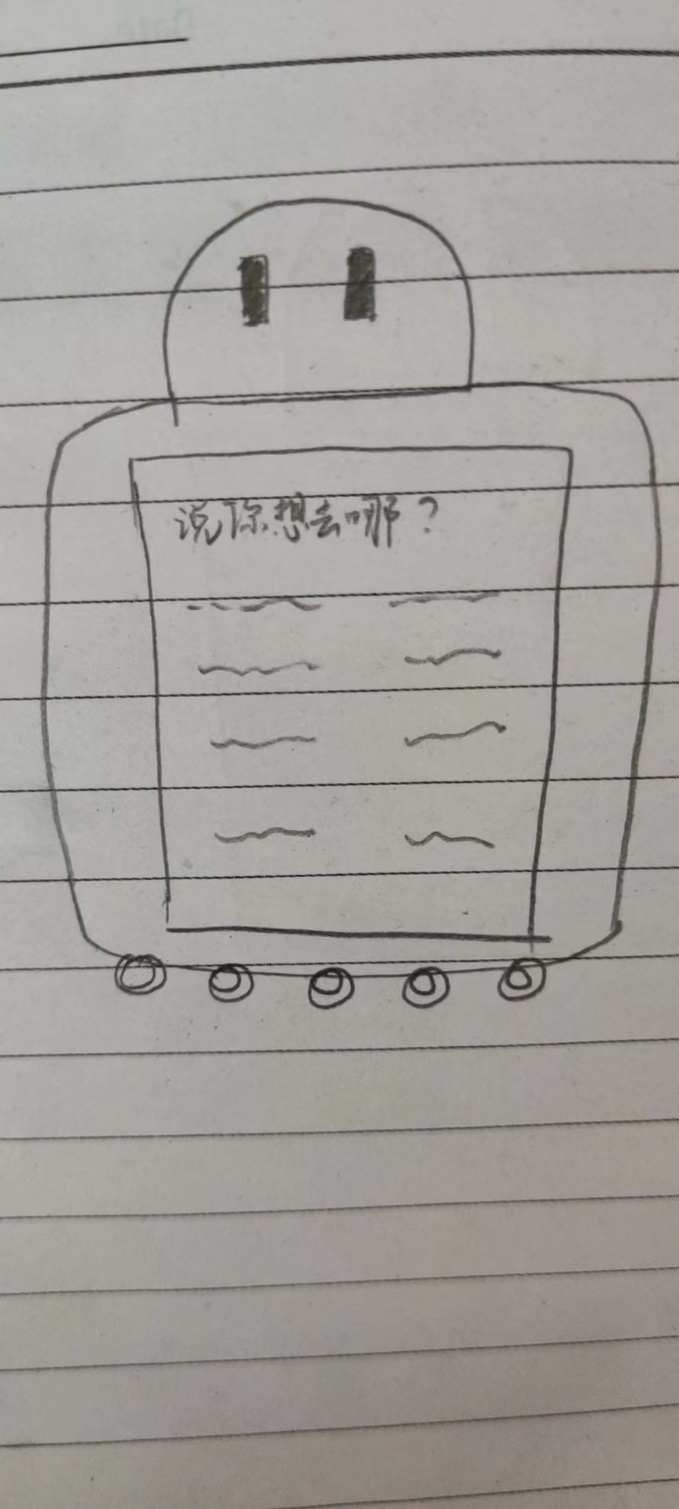

Meiling is not hostile to technology, but she approached it warily from early on. The voice assistant episode was formative: once she decided the convenience was not worth the privacy risk, she switched it off and never went back. Her continued use of the robot vacuum, by contrast, feels safe to her, since it is a machine doing a physical job with no access to her conversations. This pattern of acceptance within limits runs through her whole view of AI. She trusts its functional output when she can see how the data flows, and she withdraws that trust once the process becomes opaque. “I trust their ability to work,” she says, “but in terms of privacy disclosure, I don’t trust it.”

A Future Shaped by Class Structure

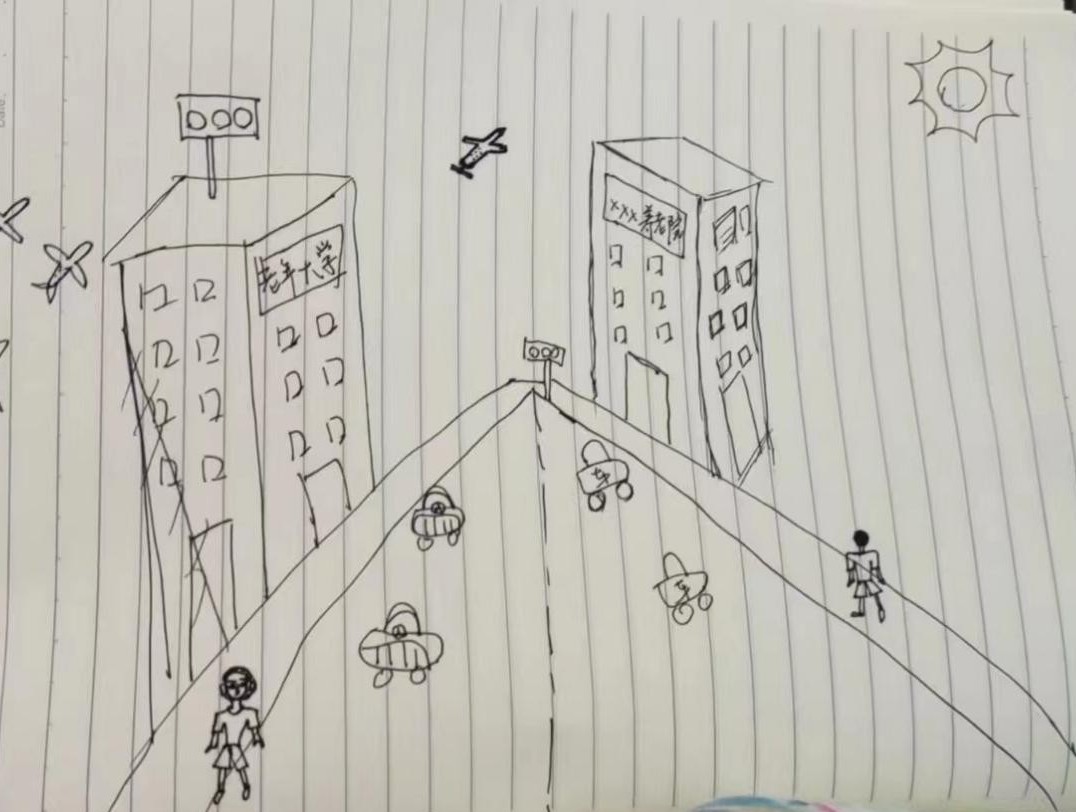

When Meiling pictures 2050, her sociology training shows immediately. She anticipates a shift toward an olive-shaped class structure, with a broad middle stratum in which white-collar workers and senior technicians form the social mainstream. She expects intelligent technology to have thoroughly penetrated everyday life, and she flags a consequence that is easy to overlook: people who are not fluent in that technology will find daily life noticeably harder. She does not treat this as a reason to slow AI development; instead, she sees it as a problem of social design. Her own 2050 looks comfortable and self-directed: she wants to become a university lecturer, achieve financial independence, and travel the world.

At Home, in School, and in the Hospital

Meiling sketches a fairly clear division of labour for AI. At home, she welcomes robots for housework and basic childcare support, though she insists that a child’s emotional upbringing must remain in human hands. She supports AI-assisted elder care, especially for its physically practical elements, while noting that a couple living together still needs emotional companionship that machines cannot provide.

In teaching, she accepts AI as a platform or intermediary, useful for transmitting technical knowledge such as how to use data-processing tools, but she draws the line at the humanities and arts, where the “humanistic flavour,” as she puts it, cannot be replicated. In medical settings she is relatively open: she sees AI as more precise than humans at tasks like injections or medication changes and finds it acceptable for prescribing and basic services, though she remains unsure about complex surgery. For exam invigilation she sees genuine advantages, and she is comfortable with AI marking questions that have standardised answers, but more cautious about open-ended ones.

Work, Education, and What Comes Next

In the workplace, Meiling consistently prefers human judgment to algorithmic screening. AI-driven recruitment, she argues, picks up only keywords and cannot achieve the face-to-face understanding that makes hiring meaningful; it also makes it easy for candidates to game the system by saying what the program wants to hear. She is more flexible about the future: if AI interviews become mainstream, she would adapt. Still, she is clear that the current logic of career-building, through references, recommendations, and gut-level human assessment, is hard to replicate in code. On jobs more broadly, she sees AI as a force that will reduce low-end work while creating high-end roles, and she insists that the transition, though painful, is unavoidable. The responsibility, she believes, is to build social support structures that cushion those who are displaced.

Where AI Belongs — and Where It Doesn’t

Despite her caution, Meiling’s overall stance is positive. She frames AI as a good helper in human life — a subordinate tool, not an autonomous agent. She is comfortable with AI in translation, environmental monitoring, and data collection for policy support. She is sceptical about crime prediction, where past behaviour is a flawed proxy for future intent. And she is firmly opposed to AI making complete political or judicial decisions, because society, in her view, is a whole whose complexity exceeds what any data model can capture. “AI may separate society into elements,” she says, “but society is a whole, and it still needs people to consider it comprehensively.” The distinction between using AI as a tool and letting AI make decisions is the line she returns to again and again.